The Alignment Desk

A writing accelerator for AI safety

Applications Closed

Applications for Easter Term 2026 are now closed. Register your interest for the next round.

The Alignment Desk is a structured writing programme for people in Cambridge with existing exposure to AI safety who want to produce and publish serious work.

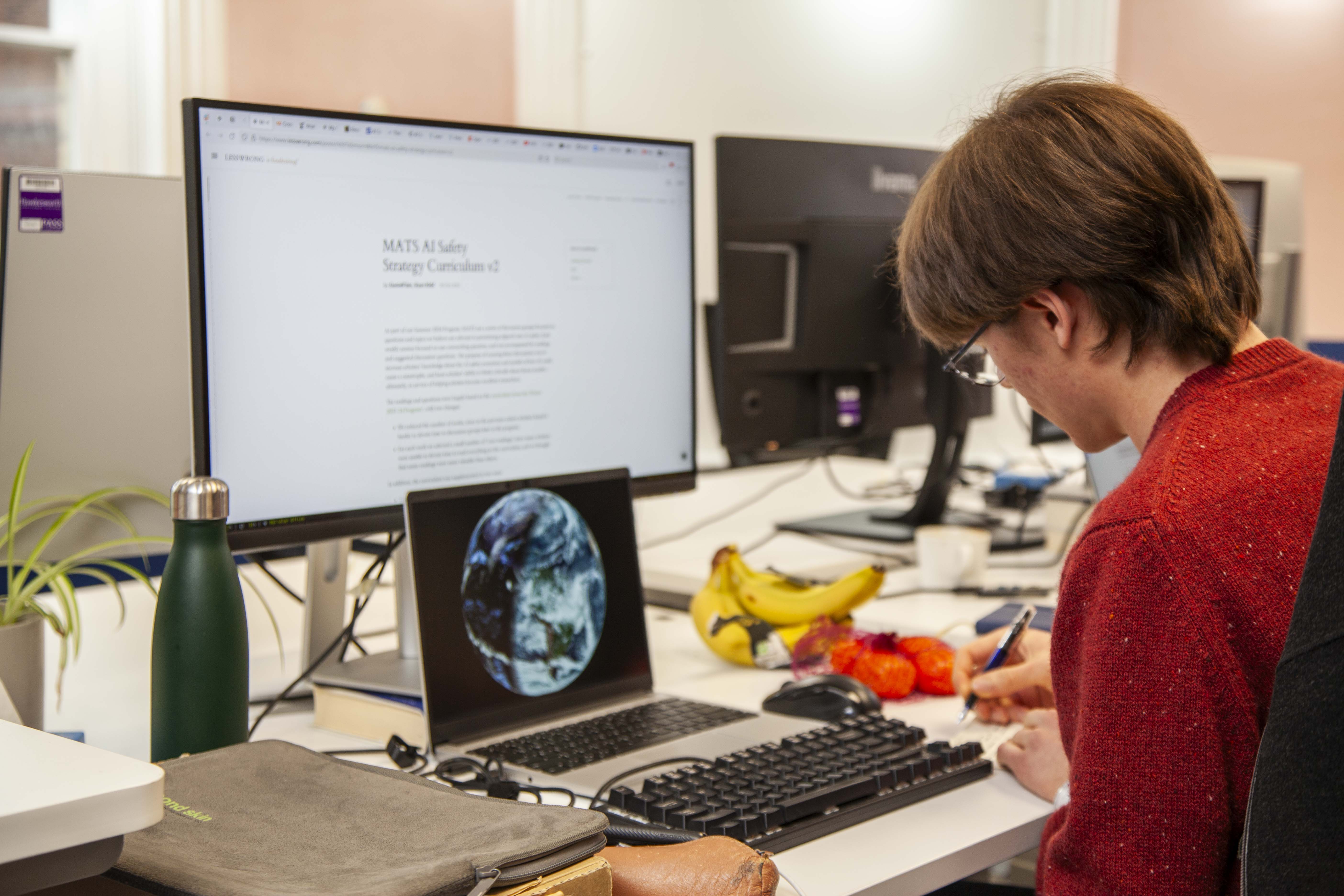

A small cohort meets weekly at the Meridian Office. Participants work on their own projects, set weekly goals, and hold each other accountable. Each participant is expected to publish three pieces during term.

MPhil student in Global Risk & Resilience at the Centre for the Study of Existential Risk. Previously a Technical Writer at Schneider Electric and Junior Researcher at the European Institute of Asian Studies. Bylines in East Asia Forum, EIAS and APEC features.

MPhil student in the Ethics of AI, Data and Algorithms at the University of Cambridge. Background in technology ethics and moral philosophy, with experience as an AI Analyst. Specialises in predictive AI, surveillance tech, fairness metrics, and AI governance.

Third-year engineering undergraduate at Cambridge. Research interests include value alignment and how goal-directed behaviour emerges from RL. Has helped with operations at CAISH since 2023. Previously worked as a software engineer at Amazon, researched hallucination detection at the Singapore AI Safety Institute, and did some work on singular learning theory.

Second-year engineering student at Cambridge, currently exploring LLM fine-tuning and generalisation through a SPAR project. Interested in using the Alignment Desk to examine technical solutions through a pragmatic lens. Background in robotics and software engineering.

Writing forces you to confront gaps in your understanding that remain hidden when you are just reading or thinking. It is also how people in this community come to know your work.

For many aspiring writers, the biggest challenge isn't a shortage of ideas, but turning those ideas into finished pieces. Developing a habit of regular output is often what makes the difference between wanting to contribute and actually doing so.

"Writing is the single biggest difference-maker between reading a lot and efficiently developing real views on important topics."

Holden Karnofsky, Learning By Writing

The Alignment Desk is best suited to people who already have context in AI safety, whether technical or governance, and want to develop and publish ideas relevant to the field. This may include people aiming for research, policy, operations, communications, or other AI-safety-adjacent roles.

Weekly Saturday sessions at the Meridian Office. Quiet, structured, and distraction-light. Whiteboards are available in separate rooms for collaboration.

You commit to a project at the start of term and report progress each week. The cohort is small, and progress is visible.

During breaks, participants can sanity-check arguments, get quick red-teams, or talk through ideas that are stuck.

Where useful, we can help connect participants with researchers, policy professionals, or others in the Cambridge AI safety community. We will also invite relevant experts into the office to give feedback on drafts.

Three published pieces during term. Imperfect and published beats perfect and private.

We are flexible on format. What matters is that you have a concrete output in mind. Examples include:
